Introduction

I created a couple of straightforward libraries that are used in almost every project, so they are good candidates for NuGet packages. Packaging them decouples the libraries from the projects that use them. It also encourages the Single Responsibility principle, because every NuGet package can be used on its own, with dependencies on only a (very) few other NuGet packages.

Creating the packages for one version of .NET is quite straightforward, and well-documented. But the next request was: “can you upgrade all our projects from .NET 4.5.2 to .NET 4.6.1, and later to .NET 4.7?”.

The plan

We have over 200 projects that should preferably all be compiled against the same .NET version. So opening each project, changing its version, and compiling it is not really an option…

    <PropertyGroup>
      <Configuration Condition=" '$(Configuration)' == '' ">Debug</Configuration>
      <Platform Condition=" '$(Platform)' == '' ">AnyCPU</Platform>
      <ProjectGuid>{21E95439-7A66-4C75-ACC5-1B9A5FF4A32D}</ProjectGuid>
      <OutputType>Library</OutputType>
      <AppDesignerFolder>Properties</AppDesignerFolder>
      <RootNamespace>MyProject.Clients</RootNamespace>
      <AssemblyName>MyProject.Clients</AssemblyName>
      <TargetFrameworkVersion>v4.6.1</TargetFrameworkVersion>
      <FileAlignment>512</FileAlignment>
      <TargetFrameworkProfile />
    </PropertyGroup>

Investigating the .csproj files, I noticed that each one has a single <TargetFrameworkVersion> element that contains the .NET version. When I change it, the .NET version property in Visual Studio changes accordingly. So this is easy: using Notepad++ I replace this value in all *.csproj files and recompile everything. This works but …
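The same search-and-replace can also be scripted instead of done in Notepad++. The following is a minimal sketch in Python, not the method used in this article; the function name retarget_projects is hypothetical, and it assumes that every project stores its version in the form v4.x.y inside a <TargetFrameworkVersion> element. It works on raw bytes so that line endings and any byte order mark stay untouched.

```python
import re
from pathlib import Path

# Matches the element with its current value, e.g. v4.5.2 (assumed format).
TARGET_RE = re.compile(
    rb"(<TargetFrameworkVersion>)\s*v[\d.]+\s*(</TargetFrameworkVersion>)"
)


def retarget_projects(root, new_version):
    """Set <TargetFrameworkVersion> to new_version in every .csproj below root.

    Returns the list of project files that were actually modified.
    """
    replacement = new_version.encode("ascii")
    changed = []
    for csproj in sorted(Path(root).rglob("*.csproj")):
        raw = csproj.read_bytes()
        updated = TARGET_RE.sub(
            lambda m: m.group(1) + replacement + m.group(2), raw
        )
        if updated != raw:
            # Only rewrite files whose content really changed.
            csproj.write_bytes(updated)
            changed.append(csproj)
    return changed
```

Calling retarget_projects with the root folder of the source tree and "v4.6.1" would update all projects in one pass; running it a second time changes nothing, so it is safe to repeat.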

What about the NuGet packages?

The packages that I created work for .NET 4.5.2, but now we’re at .NET 4.6.1. This is not optimal at best, and the assemblies may not link together properly. So I want to update the NuGet packages to contain both versions. That way, developers whose solutions are still on 4.5.2 automatically get the 4.5.2 binaries, and developers on 4.6.1 get the 4.6.1 binaries. Problem solved. But how …

Can this be automated?

Creating the basic NuGet package

This is explained quite well on the nuget.org website. These are the basic steps:

Technical prerequisites

Download the latest version of nuget.exe from nuget.org/downloads, saving it to a location of your choice. Then add that location to your PATH environment variable if it isn’t already.

Note: nuget.exe is the CLI tool itself, not an installer, so be sure to save the downloaded file from your browser instead of running it.

I copied this file to C:\Program Files (x86)\Microsoft Visual Studio 14.0\Common7\Tools, which is already in my PATH variable (Developer Command Prompt for VS2015). So now I have access to the CLI from everywhere, provided that I use the Developer Command Prompt of course.

So now we can use the NuGet CLI, which is described in the NuGet CLI reference.

Nice to have

https://github.com/NuGetPackageExplorer/NuGetPackageExplorer

From their website:

NuGet Package Explorer is a ClickOnce & Chocolatey application that makes it easy to create and explore NuGet packages. After installing it, you can double click on a .nupkg file to view the package content, or you can load packages directly from nuget feeds like nuget.org, or your own Nuget server.

This tool will prove invaluable when you are trying some more exotic stuff with NuGet.

It is also possible to change a NuGet package using the package explorer. You can change the package metadata, and also add content (such as binaries, readme files, …).

Prerequisites for a good package

An assembly (or a set of assemblies) is a good candidate for a package when it has as few dependencies as possible. For example, a logging package would only do logging, and nothing more. That way NuGet packages can be used everywhere, without special conditions. When dependencies are necessary, they are preferably on other NuGet packages.

Creating the package

In Visual Studio, create a project of your choice. Make sure that it compiles well.

Now open the DEV command prompt and enter

nuget spec

in the folder containing your project file. This will generate a template .nuspec file that you can use as a starting point. This is an example .nuspec file:

    <?xml version="1.0"?>
    <package xmlns="http://schemas.microsoft.com/packaging/2013/05/nuspec.xsd">
      <metadata>
        <!-- The identifier that must be unique within the hosting gallery -->
        <id>Diagnostics.Logging</id>

        <!-- The package version number that is used when resolving dependencies -->
        <version>1.1.0</version>

        <!-- Authors contain text that appears directly on the gallery -->
        <authors>Gaston</authors>

        <!-- Owners are typically nuget.org identities that allow gallery
             users to easily find other packages by the same owners. -->
        <owners>Gaston</owners>

        <!-- License and project URLs provide links for the gallery -->
        <!--
        <licenseUrl>http://opensource.org/licenses/MS-PL</licenseUrl>
        <projectUrl>http://github.com/contoso/UsefulStuff</projectUrl>
        -->

        <!-- The icon is used in Visual Studio's package manager UI -->
        <!--
        <iconUrl>http://github.com/contoso/UsefulStuff/nuget_icon.png</iconUrl>
        -->

        <!-- If true, this value prompts the user to accept the license when
             installing the package. -->
        <requireLicenseAcceptance>false</requireLicenseAcceptance>

        <!-- Any details about this particular release -->
        <releaseNotes>Added binaries for .NET 4.6.1</releaseNotes>

        <!-- The description can be used in package manager UI. Note that the
             nuget.org gallery uses information you add in the portal. -->
        <description>Logging base class </description>

        <!-- Copyright information -->
        <copyright>Copyright ©2017</copyright>

        <!-- Tags appear in the gallery and can be used for tag searches -->
        <tags>diagnostics logging</tags>

        <!-- Dependencies are automatically installed when the package is installed -->
        <dependencies>
          <!--<dependency id="EntityFramework" version="6.1.3" />-->
        </dependencies>
      </metadata>

      <!-- A readme.txt will be displayed when the package is installed -->
      <!--
      <files>
        <file src="readme.txt" target="" />
      </files>
      -->
    </package>

Now run

nuget pack

in your project folder, and a NuGet package will be generated for you.

Verifying the package

If you want to know whether the contents of your package are correct, use NuGet Package Explorer to open your package.

Here you see a package that I created. It contains some metadata on the left side, and the package in 2 versions on the right side. You can use this tool to add more folders and to change the metadata. This is all well and good, but not very automated. For example, how can we create a NuGet package like this one, that contains 2 .NET versions of the libraries?
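The same check can be done without a GUI, because a .nupkg file is an ordinary zip archive. The sketch below is an illustration in Python and not part of the original workflow; the function name frameworks_in_package is hypothetical. It lists the framework folders found under lib/ in a package.

```python
import zipfile


def frameworks_in_package(nupkg_path):
    """Return the sorted framework folder names found under lib/ in a .nupkg."""
    frameworks = set()
    with zipfile.ZipFile(nupkg_path) as package:
        for name in package.namelist():
            # Normalize separators; entries look like
            # lib/net452/Diagnostics.Logging.dll
            parts = name.replace("\\", "/").split("/")
            if len(parts) >= 3 and parts[0].lower() == "lib":
                frameworks.add(parts[1])
    return sorted(frameworks)
```

For a package that contains both versions of the library, the result would be the two folder names net452 and net461.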

Folder organization

I wanted to separate the creation of the package from the rest of the build process. So I created a NuGet folder in my project folder.

I moved the .nuspec file into this folder to have a starting point, and then I created a batch file that performs the following steps:

- Create the necessary folders

- Build the binaries for .NET 4.5.2

- Build the binaries for .NET 4.6.1

- Pack both sets of binaries in a NuGet package

I also wanted this package to be easily configurable, so I used some variables.

The script

Initializing the variables

    set ProjectLocation=C:\_Projects\Diagnostics.Logging
    set Project=Diagnostics.Logging
    set NugetLocation=%ProjectLocation%\NuGet\lib
    set ProjectName=%Project%.csproj
    set ProjectDll=%Project%.dll
    set ProjectNuspec=%Project%.nuspec
    set BuildOutputLocation=%ProjectLocation%\NuGet\temp
    set msbuild="C:\Program Files (x86)\MSBuild\14.0\bin\msbuild.exe"
    set nuget="C:\Program Files (x86)\Microsoft Visual Studio 14.0\Common7\Tools\nuget.exe"

The first 2 variables are the real parameters. The other project-related variables are built from these 2.

The %msbuild% and %nuget% variables allow running the commands easily without changing the path. Thanks to these 2 lines this script will run in any “DOS prompt”, not just in the Visual Studio Command Prompt.

Setting up the folder structure

    cd /d %ProjectLocation%\NuGet
    md temp
    md lib\lib\net452
    md lib\lib\net461
    copy /Y %ProjectNuspec% lib
    copy /Y readme.txt lib

In my batch file I don’t want to rely on the existence of a specific folder structure, so I create it anyway. I know that I can first test if a folder exists before trying to create it, but the end result will be the same.

Notice that I created lib\lib. The first level contains the necessary “housekeeping” files to create the package; the second level will contain the actual content that goes into the package file. The 2 copy statements copy the “housekeeping” files.
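For comparison, here is how the same "create it anyway" idea could look in Python. This is only a sketch and not part of the batch file; the function name prepare_layout is hypothetical, and the folder names follow the layout described above. The exist_ok flag makes the call succeed whether or not the folders already exist.

```python
from pathlib import Path


def prepare_layout(nuget_dir, frameworks=("net452", "net461")):
    """Create the temp folder and one lib/lib/<framework> folder per target.

    Safe to call repeatedly: existing folders are left as they are.
    Returns a dict mapping each framework name to its folder.
    """
    nuget_dir = Path(nuget_dir)
    (nuget_dir / "temp").mkdir(parents=True, exist_ok=True)
    targets = {}
    for framework in frameworks:
        # First "lib" holds the housekeeping files, second "lib" the content.
        target = nuget_dir / "lib" / "lib" / framework
        target.mkdir(parents=True, exist_ok=True)
        targets[framework] = target
    return targets
```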

Building the project in the right .NET versions

    %msbuild% "%ProjectLocation%\%ProjectName%" /t:Clean;Build /nr:false /p:OutputPath="%BuildOutputLocation%";Configuration="Release";Platform="Any CPU";TargetFrameworkVersion=v4.5.2
    copy /Y "%BuildOutputLocation%"\%ProjectDll% "%NugetLocation%"\lib\net452\%ProjectDll%

    %msbuild% "%ProjectLocation%\%ProjectName%" /t:Clean;Build /nr:false /p:OutputPath="%BuildOutputLocation%";Configuration="Release";Platform="Any CPU";TargetFrameworkVersion=v4.6.1
    copy /Y "%BuildOutputLocation%"\%ProjectDll% "%NugetLocation%"\lib\net461\%ProjectDll%

The secret is in the /p switch

When we look at a .csproj file we see that there are <PropertyGroup> elements with a lot of elements in them. Here is an extract:

    <?xml version="1.0" encoding="utf-8"?>
    <Project ToolsVersion="12.0" DefaultTargets="Build" xmlns="http://schemas.microsoft.com/developer/msbuild/2003">
      <Import Project="$(MSBuildExtensionsPath)\$(MSBuildToolsVersion)\Microsoft.Common.props"
              Condition="Exists('$(MSBuildExtensionsPath)\$(MSBuildToolsVersion)\Microsoft.Common.props')" />
      <PropertyGroup>
        <!-- … -->
        <OutputType>Library</OutputType>
        <!-- … -->
        <TargetFrameworkVersion>v4.6.1</TargetFrameworkVersion>
        <!-- … -->
      </PropertyGroup>

Each element under the <PropertyGroup> element is a property that can be set, typically in Visual Studio (Project settings). So compiling for another .NET version is as simple as changing the <TargetFrameworkVersion> element and executing the build.

But the /p flag makes this even easier:

    %msbuild% "%ProjectLocation%\%ProjectName%" ^
        /t:Clean;Build /nr:false ^
        /p:OutputPath="%BuildOutputLocation%";Configuration="Release";Platform="Any CPU";TargetFrameworkVersion=v4.5.2

This line executes MSBuild and sets the properties OutputPath, Configuration, Platform and TargetFrameworkVersion using the /p switch. Because a property passed with /p overrides the value in the project file, building for different .NET versions becomes easy. You can find more information about the MSBuild switches in the MSBuild command-line reference.
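To make the structure of the /p switch explicit, the sketch below assembles the same argument list in Python. It is an illustration only: the function name msbuild_command is hypothetical, and the property values are the ones used in the batch file. The properties are joined with semicolons into a single /p: argument, exactly as on the command line.

```python
def msbuild_command(msbuild_exe, project_file, output_path, framework_version):
    """Build the MSBuild argument list for one target framework version."""
    # Each entry becomes Name=Value inside the single /p: switch.
    properties = {
        "OutputPath": output_path,
        "Configuration": "Release",
        "Platform": "Any CPU",
        "TargetFrameworkVersion": framework_version,
    }
    property_switch = "/p:" + ";".join(
        f"{name}={value}" for name, value in properties.items()
    )
    return [msbuild_exe, project_file, "/t:Clean;Build", "/nr:false", property_switch]
```

Calling the function once with v4.5.2 and once with v4.6.1 yields the two builds; only the last property differs between them. The resulting list could presumably be handed to subprocess.run, but that step is not shown here.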

So now we are able to script the compilation of our project in different locations for different .NET versions. Once this is done we just need to package and we’re done!

    cd /d "%NugetLocation%"
    %nuget% pack %ProjectNuspec%

Conclusion

We automated the creation of NuGet packages with an extensible script. As much as possible is parameterized in the script, so it can be used for other packages as well.

It is possible to add this to your build scripts, but be careful not to rebuild and redeploy your NuGet packages when nothing in them has changed. This is why I like to keep the script handy and run it when the packages are modified (and only then).
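If the script does end up in an automated build, one way to avoid needless repackaging is to compare timestamps first. The following Python sketch is only one possible approach and rests on assumptions: the function name needs_repack is hypothetical, and it treats a package as stale when any source file (by default *.cs) is newer than the existing .nupkg file.

```python
from pathlib import Path


def needs_repack(source_dir, package_path, pattern="*.cs"):
    """Return True when the package is missing or older than any source file."""
    package = Path(package_path)
    if not package.exists():
        return True
    package_time = package.stat().st_mtime
    # Any source file modified after the package was written makes it stale.
    return any(
        source.stat().st_mtime > package_time
        for source in Path(source_dir).rglob(pattern)
    )
```

A timestamp check like this is cheap but coarse: touching a file without changing it still triggers a rebuild, so comparing file hashes would be a stricter alternative.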

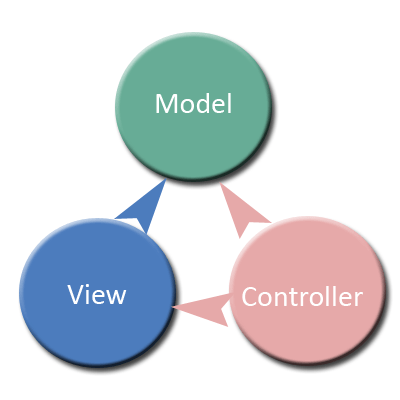

rn typically used for creating web applications. Of course it can be (and is) applied for other types of applications as well. In this article I only talk about APS.NET MVC.

rn typically used for creating web applications. Of course it can be (and is) applied for other types of applications as well. In this article I only talk about APS.NET MVC. I only give a short introduction, to make sure that we’re all on the same page.

I only give a short introduction, to make sure that we’re all on the same page.

Wikipedia has

Wikipedia has